A Glimpse into Indexfox

Indexfox

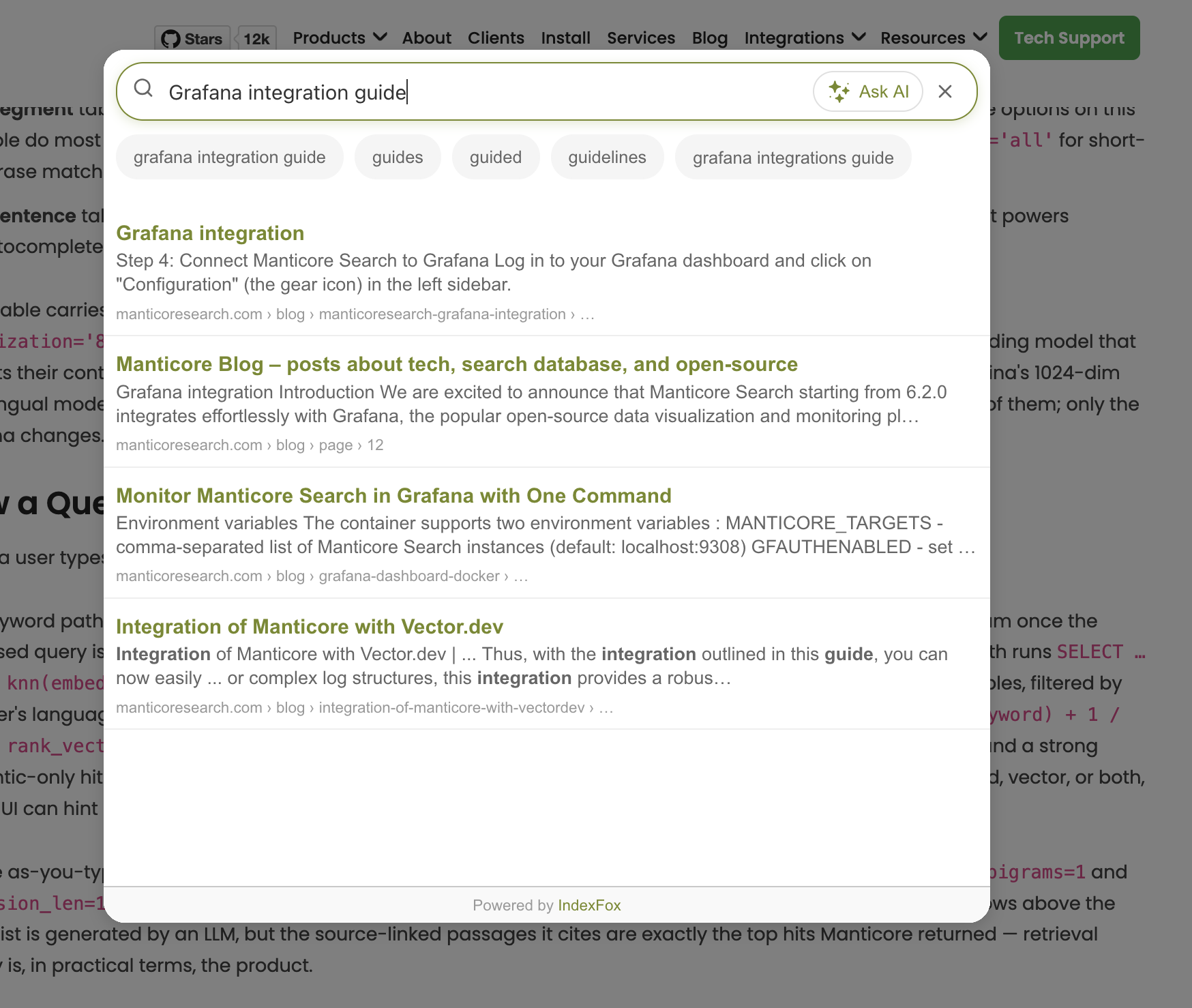

is a hosted AI search widget that any website can install with a single <script> tag. Its pitch is concise: drop the snippet into your <head>, and within a few minutes Indexfox crawls the site, indexes every page, and serves a search box that answers user questions directly — with source links — alongside the usual ranked list. The landing page sums it up as "AI search that finds, answers, and sells," and runs a live demo on apple.com so visitors can try the experience before signing up.

What makes Indexfox interesting from a search-engineering perspective is what its end users don't see. Behind the friendly fox mascot and the two-minute setup story is a hybrid search stack — keyword matching, vector retrieval, autocomplete, and answer generation — all served from a single Manticore Search instance. This post looks at how Indexfox uses Manticore under the hood, and why an open-source search engine turned out to be the right foundation for a multi-tenant SaaS.

The Problem with Site Search

Most websites still ship search that feels bolted on as an afterthought. The Indexfox landing page leads with a familiar story: a large fraction of users abandon a site after a single failed search, and on content-heavy or product-heavy sites that lost session is often a lost conversion. Classic full-text engines miss queries phrased in natural language; pure semantic search misses exact terms and product codes; running both engines side by side means two indexes to keep in sync and twice the operational surface.

Indexfox needed an engine that could run full-text and vector search in the same query, isolate one customer's data from another's without spinning up infrastructure per tenant, and survive constant re-indexing as the crawler keeps customers' content fresh — all on a budget that lets a small team support hundreds of sites.

Why Manticore Search

Indexfox settled on Manticore Search for three concrete reasons.

First, Manticore is a single open-source engine with first-class full-text and KNN vector indexes. There is no second datastore to provision, no glue code to keep a vector store and a keyword store consistent, and no licensing concern as the customer base grows. The same SQL-like dialect addresses both worlds.

Second, Manticore makes per-tenant isolation cheap. Indexfox models every customer site as its own set of tables — one for pages, one for content segments, one for sentences — created and torn down on demand. A new website added to the platform is, at the storage layer, simply a CREATE TABLE statement; an offboarding is a DROP TABLE.
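A minimal sketch of that per-tenant lifecycle, assuming a hypothetical naming scheme (`site_<id>_<layer>`) that the post does not specify. It only derives table names and the offboarding statements; the site id is validated because it is spliced into SQL text.

```javascript
// Hypothetical naming scheme: the post does not say how Indexfox names its tables.
const LAYERS = ["webpage", "segment", "sentence"];

// Only allow identifiers that are safe to splice into a statement.
function assertSafeSiteId(siteId) {
  if (!/^[a-z0-9_]{1,48}$/.test(siteId)) {
    throw new Error(`unsafe site id: ${siteId}`);
  }
}

// One table per layer for a given customer site.
function tenantTables(siteId) {
  assertSafeSiteId(siteId);
  return LAYERS.map((layer) => `site_${siteId}_${layer}`);
}

// Offboarding: one DROP TABLE per layer.
function offboardStatements(siteId) {
  return tenantTables(siteId).map((table) => `DROP TABLE IF EXISTS ${table}`);
}
```

Because each tenant owns its tables outright, removing a customer never requires a filtered delete inside a shared table.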

Third, Manticore is operationally light. The backend talks to it over the HTTP/JSON API using the official manticoresearch-ts client. Real-time inserts land immediately, the engine runs on a single VM the same way Postgres does, and there is no JVM, no cluster, and no separate orchestration plane to babysit.
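To make the HTTP/JSON path concrete, here is a hedged sketch that builds a request for Manticore's JSON `/insert` endpoint without the client library. Indexfox itself uses manticoresearch-ts, so this is an illustration of the wire format rather than its actual code; the base URL, table name, and document fields are assumptions.

```javascript
// Assumed local endpoint; 9308 is Manticore's default HTTP port.
const MANTICORE_URL = "http://127.0.0.1:9308";

// Build (but do not send) a request for the JSON /insert endpoint.
// Older Manticore releases name the "table" key "index".
function buildInsertRequest(table, id, doc) {
  return {
    url: `${MANTICORE_URL}/insert`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ table, id, doc }),
    },
  };
}

// Usage against a running instance (not executed here):
//   const { url, init } = buildInsertRequest("site_acme_segment", 1, { content: "Hello" });
//   const response = await fetch(url, init);
```

Keeping request construction separate from the network call makes the payload easy to inspect and test.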

Three Layers of Meaning

Indexfox indexes each crawled site into three layered tables, all marked engine='columnar':

- A webpage table: one row per URL, with the title, description, raw HTML, language, and an averaged page-level embedding.

- A segment table: one row per logical content block (a heading and the paragraphs underneath it). Manticore options on this table do most of the linguistic heavy lifting: morphology='lemmatize_en' for English lemmatization, bigram_index='all' for short-phrase matching, min_infix_len=2 for partial-word recall, and a curated stopwords='en' list.

- A sentence table: one row per sentence, used both for fine-grained semantic recall and as the dictionary that powers autocomplete.
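The post does not say what the page-level embedding is averaged over. Assuming it is the element-wise mean of the embeddings of that page's segments, a sketch could look like this (`averageEmbedding` is a hypothetical helper name):

```javascript
// Element-wise mean of equally sized vectors.
function averageEmbedding(vectors) {
  if (vectors.length === 0) throw new Error("need at least one vector");
  const dims = vectors[0].length;
  const sum = new Array(dims).fill(0);
  for (const vector of vectors) {
    if (vector.length !== dims) throw new Error("dimension mismatch");
    for (let i = 0; i < dims; i++) sum[i] += vector[i];
  }
  return sum.map((total) => total / vectors.length);
}
```

For example, `averageEmbedding([[1, 2], [3, 4]])` returns `[2, 3]`.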

Every table carries a float_vector field configured with knn_type='hnsw', hnsw_similarity='cosine', and quantization='8bit'. Vector dimensions are dynamic per website, because each customer can pick the embedding model that best fits their content and budget — OpenAI's 1536-dim text-embedding-3-small, Voyage's 1024-dim voyage-3, Jina's 1024-dim multilingual model, or a 384-dim local sentence-transformer for cost-sensitive deployments. Manticore stores all of them; only the schema changes.
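As a rough sketch of "only the schema changes", the function below builds a CREATE TABLE statement for a segment table whose vector width depends on the chosen model. The table options and KNN settings come from the text above; the column names and the model keys used for lookup are assumptions, and the real Indexfox schema may differ.

```javascript
// Dimensions quoted in the post. The last key is a placeholder because the
// post does not name the 384-dim local model.
const MODEL_DIMS = new Map([
  ["text-embedding-3-small", 1536],
  ["voyage-3", 1024],
  ["local-sentence-transformer", 384],
]);

// Build a CREATE TABLE statement for a segment table.
// Column names (url, heading, content, lang, embedding) are hypothetical.
function createSegmentTableSql(table, model) {
  if (!/^[a-z0-9_]+$/.test(table)) throw new Error("unsafe table name");
  const dims = MODEL_DIMS.get(model);
  if (dims === undefined) throw new Error(`unknown model: ${model}`);
  return [
    `CREATE TABLE ${table} (`,
    "  url string,",
    "  heading text,",
    "  content text,",
    "  lang string,",
    `  embedding float_vector knn_type='hnsw' knn_dims='${dims}'`,
    "    hnsw_similarity='cosine' quantization='8bit'",
    ")",
    "  morphology='lemmatize_en' bigram_index='all'",
    "  min_infix_len='2' stopwords='en' engine='columnar'",
  ].join("\n");
}
```

Switching a site from one embedding model to another would then mean creating a table with a different `knn_dims` value and re-indexing into it.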

How a Query Actually Runs

When a user types into the Indexfox widget, the backend fans out across three Manticore features in parallel.

The keyword path runs SELECT … MATCH('…') against the segment and sentence tables, adding proximity and quorum operators once the tokenised query is long enough, and uses Manticore's highlight() function to annotate snippets directly.

The vector path runs SELECT … WHERE knn(embedding, k, (?), { oversampling=3.0, rescore=1 }) against the HNSW graph in the same tables, filtered by the user's language.

Both result sets are merged with Reciprocal Rank Fusion, using the standard formula 1 / (60 + rank_keyword) + 1 / (60 + rank_vector); a result that is missing from one path simply gets no contribution from that term. A hit ranked highly by both paths floats to the top, while a strong keyword-only hit and a strong semantic-only hit each get their fair share of attention. Each result is annotated with whether it came from keyword, vector, or both, so the UI can hint at why a page appeared.
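The fusion step is small enough to sketch in full. The version below assumes each path returns a best-first list of unique document ids; the function and field names are illustrative, not Indexfox's own.

```javascript
const RRF_K = 60; // smoothing constant from the formula in the text

// keywordIds and vectorIds are document ids ordered best-first,
// each id appearing at most once per list.
function fuseRrf(keywordIds, vectorIds, k = RRF_K) {
  const fused = new Map();
  const addPath = (ids, source) => {
    ids.forEach((id, index) => {
      const rank = index + 1; // ranks are 1-based
      const entry = fused.get(id) ?? { id, score: 0, sources: [] };
      entry.score += 1 / (k + rank);
      entry.sources.push(source);
      fused.set(id, entry);
    });
  };
  addPath(keywordIds, "keyword");
  addPath(vectorIds, "vector");
  return [...fused.values()]
    .map((entry) => ({
      id: entry.id,
      score: entry.score,
      // Label the origin so the UI can hint at why a page appeared.
      source: entry.sources.length === 2 ? "both" : entry.sources[0],
    }))
    .sort((a, b) => b.score - a.score);
}
```

With `fuseRrf(["a", "b", "c"], ["b", "d"])`, document `b` scores 1/62 + 1/61 and ranks first with source `"both"`, followed by `a`, `d`, and `c`.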

For the as-you-type box, the widget calls Manticore's CALL AUTOCOMPLETE against the sentence table with force_bigrams=1 and expansion_len=12, returning bigram-aware suggestions in well under a millisecond. The AI answer the widget shows above the result list is generated by an LLM, but the source-linked passages it cites are exactly the top hits Manticore returned — retrieval quality is, in practical terms, the product.
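A hedged sketch of how the autocomplete statement might be assembled, using the two option values quoted above in Manticore's `value AS option` form. The helper names and the table name in the usage example are assumptions.

```javascript
// Escape a value for use inside a single-quoted SQL string literal.
function sqlQuote(value) {
  return "'" + value.replace(/\\/g, "\\\\").replace(/'/g, "\\'") + "'";
}

// Build the CALL AUTOCOMPLETE statement for a typed prefix.
function autocompleteSql(prefix, table) {
  if (!/^[a-z0-9_]+$/.test(table)) throw new Error("unsafe table name");
  return (
    `CALL AUTOCOMPLETE(${sqlQuote(prefix)}, '${table}', ` +
    "1 AS force_bigrams, 12 AS expansion_len)"
  );
}
```

For instance, `autocompleteSql("hyb", "site_acme_sentence")` yields `CALL AUTOCOMPLETE('hyb', 'site_acme_sentence', 1 AS force_bigrams, 12 AS expansion_len)`.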

Customers see none of this. The integration on their side is the snippet that's been on the Indexfox landing page since launch:

```html
<script>
(function(d, w) {
  w.IndexfoxID = 'your-website-id';
  var s = d.createElement('script');
  s.async = true;
  s.src = 'https://widget.indexfox.ai/indexfox.js';
  if (d.head) d.head.appendChild(s);
})(document, window);
</script>
```

One tag in the page, one Manticore instance on the other end.

Manticore Search Uses Indexfox Too

The search box at the top of manticoresearch.com is itself an Indexfox widget. Click the search input or press ⌘+K on any page on this site and you'll get the same hybrid keyword + semantic results, the same as-you-type bigram suggestions, and the same AI-generated answer with source links, all pulling from Manticore's own blog, docs, and product pages. The integration is exactly the <script> snippet above, dropped into the site's layout template. Manticore powers Indexfox under the hood; Indexfox now powers search on Manticore's own site.

The Takeaway

Indexfox's bet was that the right open-source search engine could replace a Postgres-plus-pgvector-plus-Elasticsearch stack with one binary, and let a small team focus on crawler quality, embedding choice, and answer generation rather than on running infrastructure. Today every keystroke into the Indexfox widget — on the live demo at indexfox.ai and on every site that has installed it — is served by Manticore.